In recent years, deep learning methods based on neural networks have made considerable progress in natural language processing (NLP). Named Entity Recognition (NER), one of the fundamental NLP tasks, is no exception: neural network architectures have achieved good results on it as well. I have recently read a series of papers that apply neural networks to NER, and in this article I summarize them and share my notes.

1 Introduction

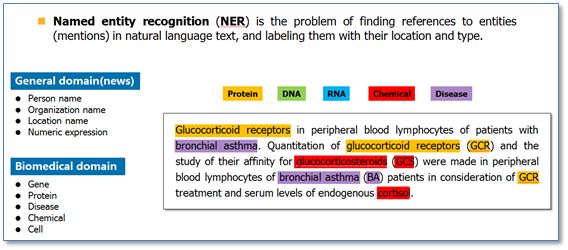

Named Entity Recognition (NER) is the task of finding entities in natural language text and marking their location and type, as shown below. It is the basis for more complex NLP tasks such as relation extraction and information retrieval.

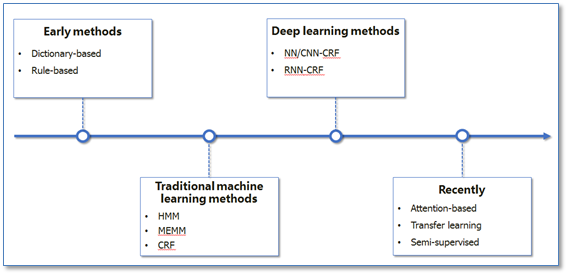
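Concretely, "location and type" usually means a list of typed spans. The sketch below recovers such spans from a BIO tag sequence. It assumes tags of the form `B-TYPE`, `I-TYPE` and `O`; the helper name `bio_to_spans` and the lenient handling of a stray `I-` tag are illustrative choices, not part of any particular paper.

```python
from typing import List, Optional, Tuple

def bio_to_spans(tags: List[str]) -> List[Tuple[str, int, int]]:
    """Return (entity_type, start, end_exclusive) for every entity in a BIO tag sequence."""
    spans: List[Tuple[str, int, int]] = []
    current_type: Optional[str] = None
    start = 0
    for i, tag in enumerate(tags):
        # A B- tag, an O tag, or an I- tag of a different type closes any open entity.
        if tag.startswith("B-") or tag == "O" or (tag.startswith("I-") and tag[2:] != current_type):
            if current_type is not None:
                spans.append((current_type, start, i))
                current_type = None
        if tag.startswith("B-"):
            current_type, start = tag[2:], i
        elif tag.startswith("I-") and current_type is None:
            # Lenient choice (assumption): a stray I- tag starts a new entity.
            current_type, start = tag[2:], i
    if current_type is not None:
        spans.append((current_type, start, len(tags)))
    return spans
```

For example, the tags `["B-PER", "I-PER", "O", "B-LOC"]` yield `[("PER", 0, 2), ("LOC", 3, 4)]`.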

NER has long been a research hotspot in NLP. Methods have evolved from early lexicon- and rule-based approaches, to traditional machine learning, to deep learning in recent years; the general trend of NER research is roughly as shown in the following figure.

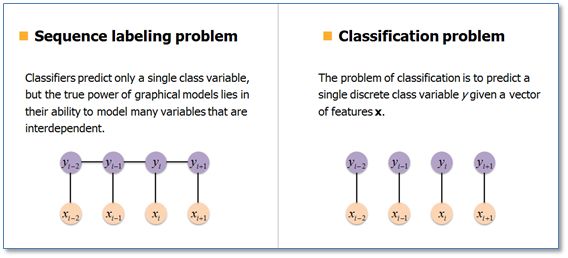

In machine learning-based methods, NER is treated as a sequence labeling problem. Unlike a plain classification problem, in sequence labeling the predicted label at the current position depends not only on the current input features but also on the previously predicted label; that is, the labels in the predicted sequence are strongly interdependent. For example, with the BIO tagging scheme, an I tag never immediately follows an O tag in a correct tag sequence.

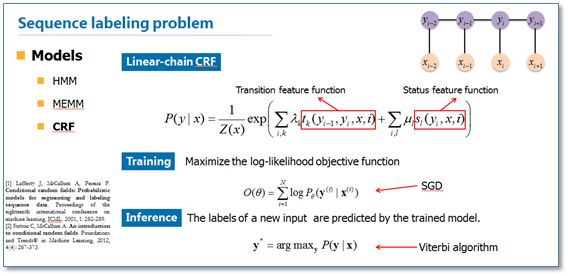
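The BIO dependency can be written as a rule over pairs of adjacent tags, which is exactly the kind of information a per-token classifier cannot see. The minimal sketch below assumes the common strict reading of BIO (an `I-X` tag may only follow `B-X` or `I-X`, and the sentence start behaves like `O`); the names `allowed_transition`, `is_valid_bio` and the toy label set are hypothetical.

```python
from typing import Dict, List, Tuple

def allowed_transition(prev: str, curr: str) -> bool:
    """Strict BIO rule (assumption): I-X may only follow B-X or I-X; other tags may follow anything."""
    if curr.startswith("I-"):
        return prev in ("B-" + curr[2:], "I-" + curr[2:])
    return True

def is_valid_bio(tags: List[str]) -> bool:
    """Check every adjacent pair, treating the sentence start like an O tag."""
    previous = ["O"] + tags[:-1]
    return all(allowed_transition(p, c) for p, c in zip(previous, tags))

# A transition table like this could serve as a hard constraint on a sequence model's transition scores.
LABELS = ["O", "B-PER", "I-PER", "B-LOC", "I-LOC"]
TRANSITION_OK: Dict[Tuple[str, str], bool] = {
    (p, c): allowed_transition(p, c) for p in LABELS for c in LABELS
}
```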

Among traditional machine learning methods, the Conditional Random Field (CRF) is the mainstream model for NER. Its objective function includes not only state feature functions over the input but also label transition feature functions. During training, the model parameters can be learned with SGD. Once the model is trained, finding the output sequence that maximizes the objective function for a given input sequence is a dynamic programming problem, which can be solved with the Viterbi algorithm.

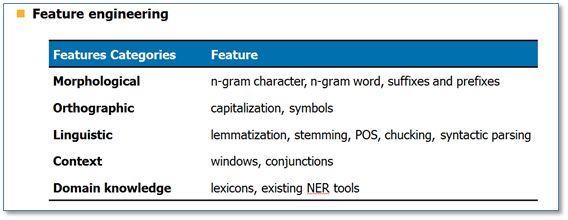
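The decoding step can be sketched as follows. This is a minimal Viterbi decoder for a linear-chain model, assuming the per-position label scores (state features) and the label-to-label scores (transition features) have already been computed as plain nested lists; training the parameters with SGD is not shown.

```python
from typing import List, Sequence, Tuple

def viterbi(emissions: Sequence[Sequence[float]],
            transitions: Sequence[Sequence[float]]) -> Tuple[float, List[int]]:
    """Highest-scoring label path for a linear-chain CRF.

    emissions[t][j]   : score of label j at position t (state features)
    transitions[i][j] : score of label i followed by label j (transition features)
    """
    n_labels = len(transitions)
    best = list(emissions[0])  # best[j]: best score of any path ending in label j so far
    backpointers: List[List[int]] = []
    for t in range(1, len(emissions)):
        new_best, pointers = [], []
        for j in range(n_labels):
            # Choose the best previous label i for current label j.
            candidates = [best[i] + transitions[i][j] for i in range(n_labels)]
            i_star = max(range(n_labels), key=candidates.__getitem__)
            new_best.append(candidates[i_star] + emissions[t][j])
            pointers.append(i_star)
        best = new_best
        backpointers.append(pointers)
    # Trace back from the best final label.
    last = max(range(n_labels), key=best.__getitem__)
    path = [last]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    path.reverse()
    return best[last], path
```

With `emissions = [[2.0, 0.0], [0.0, 1.0], [0.0, 1.0]]` and `transitions = [[0.0, -5.0], [0.0, 1.0]]`, the per-token argmax would be `[0, 1, 1]`, but the transition scores make `[1, 1, 1]` (score 4.0) the best path, which is the interdependence between labels described above.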

In traditional machine learning methods, the commonly used features are as follows:

Next, we focus on how neural network architectures are used for NER.

2 Mainstream neural network architectures for NER

2.1 NN/CNN-CRF model

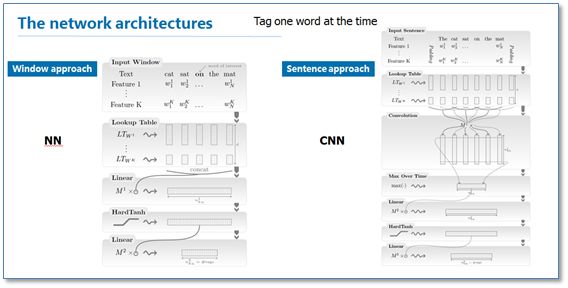

"Natural language processing (almost) from scratch" is one of the early works that applied neural networks to NER. In this paper, the authors propose two network architectures for NER: the window approach and the sentence approach. The main difference is that the window approach feeds only the context window around the word being tagged into a traditional feed-forward NN, whereas the sentence approach feeds the entire sentence as input for each word being tagged, adds a feature encoding every word's position relative to that word so that the words in the sentence can be distinguished, and then applies a convolutional neural network (CNN) layer.

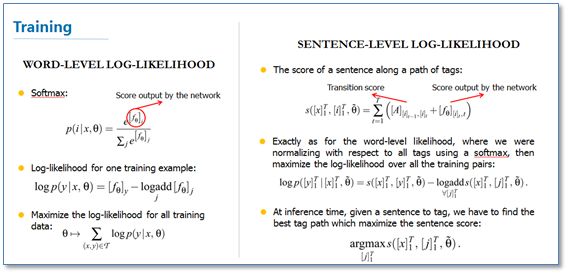
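The two input schemes differ mainly in what is fed to the network for each word. The sketch below shows both, assuming a padding token `<PAD>`, a dictionary of word vectors, and zero vectors for unknown or padding tokens; all of these names and choices are illustrative, and the network layers themselves are omitted.

```python
from typing import Dict, List

PAD = "<PAD>"  # hypothetical padding token for positions outside the sentence

def context_windows(tokens: List[str], half_width: int) -> List[List[str]]:
    """Window approach: for each position, the 2*half_width + 1 surrounding tokens, padded at the edges."""
    padded = [PAD] * half_width + tokens + [PAD] * half_width
    return [padded[i:i + 2 * half_width + 1] for i in range(len(tokens))]

def window_input(window: List[str], embeddings: Dict[str, List[float]], dim: int) -> List[float]:
    """Concatenate the word vectors of one window into a fixed-size input for a feed-forward NN."""
    vec: List[float] = []
    for tok in window:
        vec.extend(embeddings.get(tok, [0.0] * dim))  # unknown or padding token -> zero vector (assumption)
    return vec

def relative_positions(sentence_length: int, target: int) -> List[int]:
    """Sentence approach: each word's offset from the word being tagged, later embedded as a feature."""
    return [j - target for j in range(sentence_length)]
```

Because the window approach sees a fixed number of tokens, its input size is constant; the sentence approach instead sees every word, and the relative-position feature is what tells the CNN which word is currently being tagged.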

For training, the authors also give two objective functions. One is the word-level log-likelihood, which uses softmax to predict each label's probability and treats the task as a traditional classification problem. The other is the sentence-level log-likelihood, which, to capture the advantage of CRF models in sequence labeling, adds label transition scores to the objective function. Much later work refers to this idea as adding a CRF layer, so I call this the NN/CNN-CRF model.
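The difference between the two objectives can be sketched in a few lines. The sentence-level version below scores a whole label path (network scores plus transition scores) and normalizes over all paths with the forward algorithm; the word-level version normalizes each position independently. This is a minimal sketch assuming the network scores are already given as nested lists; `EMISSIONS` and `TRANSITIONS` are made-up toy values.

```python
import math
from typing import List, Sequence

def logsumexp(xs: Sequence[float]) -> float:
    """Numerically stable log(sum(exp(x)))."""
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))

def path_score(emissions, transitions, labels: List[int]) -> float:
    """Unnormalized score of one label sequence: network scores plus transition scores."""
    score = sum(emissions[t][y] for t, y in enumerate(labels))
    score += sum(transitions[labels[t - 1]][labels[t]] for t in range(1, len(labels)))
    return score

def log_partition(emissions, transitions) -> float:
    """Log of the summed exp-scores of all label sequences, via the forward algorithm."""
    n = len(transitions)
    alpha = list(emissions[0])
    for t in range(1, len(emissions)):
        alpha = [logsumexp([alpha[i] + transitions[i][j] for i in range(n)]) + emissions[t][j]
                 for j in range(n)]
    return logsumexp(alpha)

def sentence_nll(emissions, transitions, labels: List[int]) -> float:
    """Sentence-level objective: -log p(label sequence | sentence)."""
    return log_partition(emissions, transitions) - path_score(emissions, transitions, labels)

def word_nll(emissions, labels: List[int]) -> float:
    """Word-level objective: independent softmax per token, no transition scores."""
    return sum(logsumexp(row) - row[y] for row, y in zip(emissions, labels))

# Toy scores for a 2-token sentence with 2 labels.
EMISSIONS = [[1.0, 0.0], [0.0, 2.0]]
TRANSITIONS = [[0.5, -1.0], [0.2, 0.0]]
```

With all transition scores set to zero the two objectives coincide, so the transition scores are exactly what the sentence-level likelihood adds.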

In the authors' experiments, the NN and CNN architectures performed about the same, while using the sentence-level likelihood (that is, adding the CRF layer) clearly improved NER performance.

2.2 RNN-CRF model

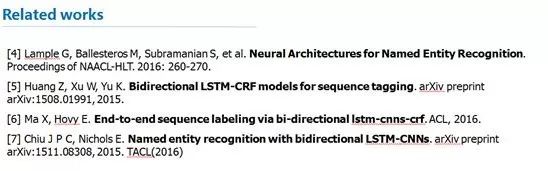

Building on the CRF idea above, a series of works appeared around 2015 that combine an RNN architecture with a CRF layer for NER. Representative work mainly includes:

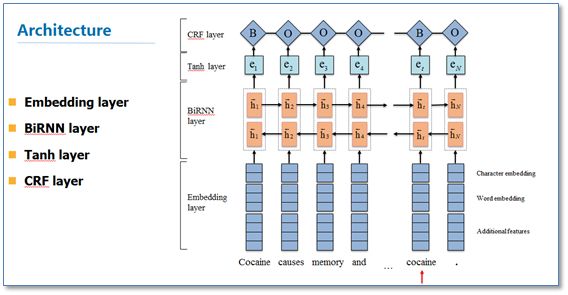

In summary, these works share an RNN-CRF model, whose structure is as follows:

The model consists mainly of an embedding layer (mostly word vectors and character vectors, plus some additional features), a bidirectional RNN layer, a tanh hidden layer, and a final CRF layer. Its main difference from the NN/CNN-CRF model above is that it uses a bidirectional RNN instead of an NN/CNN; the RNN is usually an LSTM or GRU. Experimental results show that RNN-CRF achieves better results, matching or exceeding CRF models built on rich hand-crafted features, and it has become the most mainstream model among deep learning-based NER methods. In terms of features, the model inherits the advantages of deep learning: without feature engineering, word vectors and character vectors alone achieve good results, and high-quality dictionary features can improve them further.
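The layer stack above can be sketched as a forward pass on plain lists. To keep it short, this sketch swaps in a plain tanh RNN for the LSTM/GRU, uses the same toy weights for both directions, and performs no training; all weight values are made up. The resulting per-position label scores are what a CRF layer would consume as its state scores.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]

def matvec(m: Matrix, v: Vector) -> Vector:
    """Matrix-vector product on plain lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def rnn_pass(inputs: List[Vector], w_in: Matrix, w_rec: Matrix) -> List[Vector]:
    """Plain tanh RNN (stand-in for LSTM/GRU): h_t = tanh(W_in x_t + W_rec h_{t-1}), h_0 = 0."""
    h = [0.0] * len(w_rec)
    states = []
    for x in inputs:
        h = [math.tanh(a + b) for a, b in zip(matvec(w_in, x), matvec(w_rec, h))]
        states.append(h)
    return states

def birnn(inputs: List[Vector], fwd: Tuple[Matrix, Matrix], bwd: Tuple[Matrix, Matrix]) -> List[Vector]:
    """Concatenate forward states with backward states (computed on the reversed sequence)."""
    forward = rnn_pass(inputs, *fwd)
    backward = rnn_pass(inputs[::-1], *bwd)[::-1]
    return [f + b for f, b in zip(forward, backward)]

def emission_scores(states: List[Vector], w_hidden: Matrix, w_out: Matrix) -> List[Vector]:
    """tanh hidden layer, then a linear layer giving one score per label at each position."""
    return [matvec(w_out, [math.tanh(z) for z in matvec(w_hidden, s)]) for s in states]

# Toy, untrained weights: input dim 2, hidden dim 2 per direction, 3 hidden units, 2 labels.
W_IN = [[0.5, -0.5], [0.1, 0.2]]
W_REC = [[0.3, 0.0], [0.0, 0.3]]
W_HIDDEN = [[0.1, 0.2, -0.1, 0.0], [0.0, 0.3, 0.1, -0.2], [0.2, 0.0, 0.0, 0.1]]
W_OUT = [[1.0, -1.0, 0.5], [0.0, 0.5, 1.0]]

sentence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy word vectors
states = birnn(sentence, (W_IN, W_REC), (W_IN, W_REC))
scores = emission_scores(states, W_HIDDEN, W_OUT)  # 3 positions x 2 labels
```

The concatenation in `birnn` is the point of the bidirectional layer: each position's representation summarizes both its left context (forward pass) and its right context (backward pass).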

3 Some recent work

Over the past year, NER research based on neural network architectures has focused mainly on two directions: improving models with the popular attention mechanism, and handling settings with only a small amount of labeled training data.

3.1 Attention-based

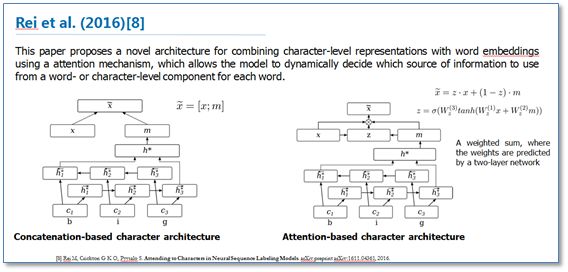

"Attending to Characters in Neural Sequence Labeling Models" builds on the RNN-CRF architecture and focuses on improving how word vectors and character vectors are combined. Instead of simply concatenating the two, it uses an attention mechanism to compute a weighted sum of them, with two traditional neural network hidden layers learning the attention weights, so that the model can dynamically decide how much to rely on word-vector and character-vector information. Experimental results show that this works better than the original concatenation.

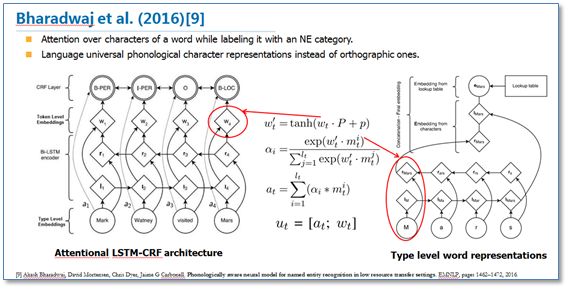
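A weighted sum of this kind can be sketched as an element-wise gate. The sketch assumes the word vector and the character-based vector have the same dimension and that the weights `z` come from two small layers (a tanh layer, then a sigmoid layer); this parameterization and the toy weight matrices are assumptions for illustration, so check the paper for the exact form.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]

def matvec(m: Matrix, v: Vector) -> Vector:
    """Matrix-vector product on plain lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def sigmoid(a: float) -> float:
    return 1.0 / (1.0 + math.exp(-a))

def combine(word_vec: Vector, char_vec: Vector,
            w1: Matrix, w2: Matrix, w3: Matrix) -> Tuple[Vector, Vector]:
    """Gate each dimension between the word vector and the character-based vector.

    Assumed form: z = sigmoid(W3 tanh(W1 x + W2 m)); result = z * x + (1 - z) * m.
    """
    hidden = [math.tanh(a + b) for a, b in zip(matvec(w1, word_vec), matvec(w2, char_vec))]
    z = [sigmoid(a) for a in matvec(w3, hidden)]
    combined = [zi * x + (1.0 - zi) * m for zi, x, m in zip(z, word_vec, char_vec)]
    return combined, z

# Toy example with made-up 2-dimensional vectors and weights.
x = [1.0, -1.0]  # word vector
m = [0.0, 1.0]   # character-based vector
result, gate = combine(x, m, [[0.5, 0.0], [0.0, 0.5]], [[0.2, 0.1], [0.0, 0.3]], [[1.0, -1.0], [0.5, 0.5]])
```

Because each `z` lies in (0, 1), every output dimension lies between the corresponding word-vector and character-vector values, so the model can lean on character information for rare words and on word vectors for frequent ones.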

Another paper, "Phonologically aware neural model for named entity recognition in low resource transfer settings", adds phonological features to the original BiLSTM-CRF model and applies an attention mechanism over the character vectors, so that the model learns to focus on the more informative characters. The improvement is shown in the figure below.
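Attention over characters can be sketched as softmax-weighted pooling. This minimal version assumes dot-product scoring against a learned query vector, which is one common choice and not necessarily the scoring function used in the paper; `attend_characters` and the query are hypothetical.

```python
import math
from typing import List, Tuple

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend_characters(char_vecs: List[List[float]], query: List[float]) -> Tuple[List[float], List[float]]:
    """Score each character vector against a (learned) query, normalize with softmax,
    and return the weighted sum of character vectors together with the weights."""
    scores = [sum(q * c for q, c in zip(query, vec)) for vec in char_vecs]
    weights = softmax(scores)
    dim = len(char_vecs[0])
    pooled = [sum(w * vec[d] for w, vec in zip(weights, char_vecs)) for d in range(dim)]
    return pooled, weights
```

Characters whose vectors align with the query receive larger weights, so the pooled vector is dominated by the characters the model has learned to treat as informative.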

3.2 Small amounts of labeled data

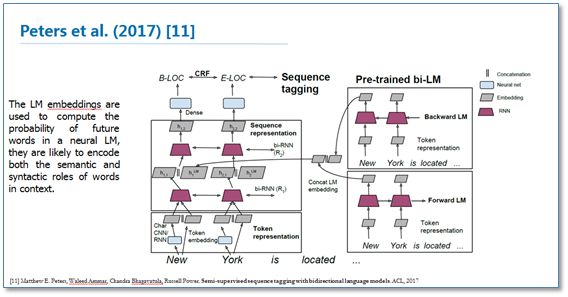

Deep learning methods generally require a large amount of annotated data, but some domains lack large annotated datasets. How to perform neural NER with only a small amount of annotated data has therefore been a focus of recent research. This includes transfer learning, such as "Transfer Learning for Sequence Tagging with Hierarchical Recurrent Networks", and semi-supervised learning. Here I mention a recent paper accepted at ACL 2017, "Semi-supervised sequence tagging with bidirectional language models". The paper trains a bidirectional neural language model on a massive unlabeled corpus, uses this trained language model to obtain a language model embedding (LM embedding) for each word to be tagged, and adds this vector as a feature to the original bidirectional RNN-CRF model. Experimental results show that adding the LM embedding greatly improves NER performance when little annotated data is available, and it still improves on the original RNN-CRF model even with large amounts of labeled training data. The overall model structure is as follows:
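Once the language models are trained, the LM embedding is just extra features per token. The sketch below assumes the forward and backward LM hidden states for each token have already been computed by frozen, pretrained models and shows only how they are joined and concatenated onto the tagger's token representations; the function names are hypothetical, and where exactly the vector enters the tagger is a design choice this sketch does not settle.

```python
from typing import List

Vector = List[float]

def lm_embeddings(fwd_lm_states: List[Vector], bwd_lm_states: List[Vector]) -> List[Vector]:
    """LM embedding for token t: forward LM state (reads tokens 0..t) joined with
    backward LM state (reads tokens t..n-1). Both LMs are assumed pretrained and frozen."""
    return [f + b for f, b in zip(fwd_lm_states, bwd_lm_states)]

def augment(token_reprs: List[Vector], lm_embs: List[Vector]) -> List[Vector]:
    """Concatenate each token's LM embedding onto its existing representation in the tagger."""
    if len(token_reprs) != len(lm_embs):
        raise ValueError("one LM embedding is needed per token")
    return [r + e for r, e in zip(token_reprs, lm_embs)]
```

Since the language models are trained only on unlabeled text, this adds information without requiring any more annotations, which is why it helps most when labeled data is scarce.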

4 Summary

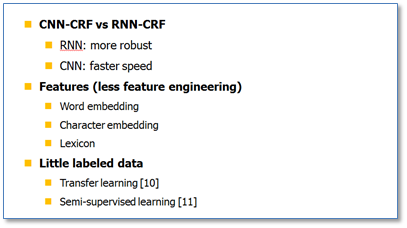

To summarize: at present, NN/CNN/RNN-CRF models that combine neural networks with a CRF have become the mainstream models for NER. In my view, neither CNNs nor RNNs hold an absolute advantage; each has its own strengths. Because RNNs naturally model sequences, RNN-CRF is more widely used. Neural NER methods inherit the advantages of deep learning and do not require extensive hand-crafted features: word vectors and character vectors alone reach mainstream performance, and high-quality dictionary features can improve results further. For small labeled training sets, transfer learning and semi-supervised learning should be the focus of future research.
